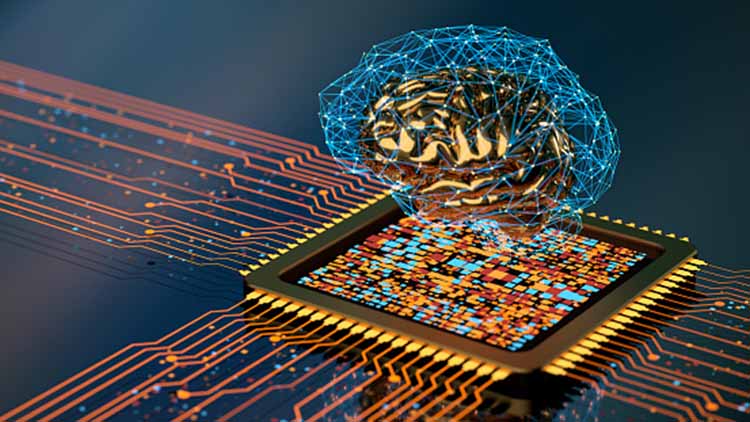

In the age of “Smart Everything,” when gadgets are becoming ever more intelligent and networked, the age-old dilemma “What came first, the chicken or the egg?” takes on a new form. Many of us enjoyed puzzling over it as children, because it tests your understanding of what led to what. Rather than the chicken and the egg, here we’ll focus on a more modern, technological version of the question: “What came first: AI or the chips that accelerate AI?” At first glance this looks like a trick question. Artificial intelligence algorithms are not new: the first studies in the field were conducted in the 1950s, long before there were chips designed to speed up AI algorithms.

Modern hardware systems are growing increasingly complex, and the rising demand for computing resources makes it harder to explore the design space quickly and efficiently in search of better implementations. Over the last several years, decision intelligence has increasingly been applied to integrated circuit (IC) design processes to search the design solution space more methodically and intelligently, shorten turnaround times, and improve IC performance.

We have been working on an AI- and machine learning (ML)-driven IC design orchestration project to address these challenges:

- Make the IC design environment available on our hybrid cloud platform, so that we can quickly scale workloads up or down as computing needs change.

- Produce better results in less overall time.

Using AI- and ML-driven automated parameter tuning, we achieve scalable execution of the IC design workload and produce designs with better performance.
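At its core, automated parameter tuning means searching over an EDA flow's configuration knobs and keeping the settings that score best. The minimal sketch below uses plain random search, a simple baseline swapped in for illustration rather than the AI/ML method described here. The knob names, their ranges, and the `run_flow` cost model are all hypothetical stand-ins for launching a real synthesis or place-and-route job and parsing its reports.

```python
import random

# Hypothetical tuning knobs; real EDA tools expose their own parameter names.
SEARCH_SPACE = {
    "placement_density": [0.60, 0.65, 0.70, 0.75, 0.80],
    "clock_uncertainty_ps": [20, 30, 40, 50],
    "route_effort": ["low", "medium", "high"],
}

def run_flow(params):
    """Hypothetical stand-in for one EDA run; returns a made-up cost (lower is better).

    A real flow would launch the tool and parse its timing/power/area reports.
    """
    quality_penalty = {"low": 3.0, "medium": 1.5, "high": 1.0}[params["route_effort"]]
    runtime_cost = {"low": 0.2, "medium": 0.5, "high": 1.0}[params["route_effort"]]
    congestion = max(0.0, params["placement_density"] - 0.70) * 40
    timing = params["clock_uncertainty_ps"] * 0.05
    return quality_penalty + runtime_cost + congestion + timing

def random_search(space, objective, trials, seed=0):
    """Sample `trials` random configurations and keep the cheapest one."""
    rng = random.Random(seed)
    best_params, best_cost = None, float("inf")
    for _ in range(trials):
        params = {name: rng.choice(values) for name, values in space.items()}
        cost = objective(params)
        if cost < best_cost:
            best_params, best_cost = params, cost
    return best_params, best_cost

best, cost = random_search(SEARCH_SPACE, run_flow, trials=30)
print(best, round(cost, 2))
```

Because each trial is independent, trials like these could in principle be fanned out across cloud workers, which is where elastic scaling helps. A smarter strategy, such as Bayesian optimization or a learned model, would replace `random_search` without changing the surrounding structure.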

The semiconductor sector stands to benefit significantly from artificial intelligence. How can semiconductor companies deploy AI at scale and still capture its value?

At every stage of a semiconductor company’s operations, from research and chip design to manufacturing and sales, AI and ML have the potential to generate enormous commercial value.

Artificial intelligence is now being used in a variety of novel applications, including detecting anomalies in data transfers to improve data security, and boosting performance while lowering power consumption in a broad range of end devices.
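Anomaly detection on data transfers can start from something much simpler than deep learning. The sketch below is a statistical baseline, not any specific product's method: it learns the mean and standard deviation of transfer sizes from a known-good history window and flags new transfers that fall more than a chosen number of standard deviations away (a z-score test). The byte counts and the threshold of 3 are hypothetical.

```python
from statistics import mean, stdev

def fit_baseline(history):
    """Learn the typical transfer size (bytes) from a known-good window."""
    return mean(history), stdev(history)

def is_anomalous(size, baseline, threshold=3.0):
    """Flag a transfer lying more than `threshold` standard deviations from the mean."""
    mu, sigma = baseline
    return abs(size - mu) > threshold * sigma

# Hypothetical history of transfer sizes: mean 100, sample standard deviation 2.
baseline = fit_baseline([100, 102, 98, 101, 99, 100, 103, 97])
print(is_anomalous(104, baseline))  # False: 2 standard deviations away
print(is_anomalous(150, baseline))  # True: 25 standard deviations away
```

A production system would learn richer features (source, destination, timing) and refresh its baseline over time, but the fit-then-score structure stays the same.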

These emerging applications show that the technology can do far more than what most people are used to seeing it do, such as telling cats from dogs with machine learning and deep learning. For example, data can be prioritized and partitioned to improve a chip’s or system’s power consumption and performance without any human involvement. And many flavors of artificial intelligence can be applied throughout design and production to identify faults and problems that humans cannot. However, adding all of these components and functions makes chip design more difficult, particularly at advanced nodes, because probabilistic results are replacing fixed answers and the number of variables keeps growing.

“As you move AI out to the edge, the edge starts to look like the data center”.

AI everywhere.

All of this is a very big deal for semiconductor makers. Precedence Research’s most recent study predicts that the global AI market will grow from $87 billion in 2021 to more than $1.6 trillion by 2030. That figure covers both data centers and edge devices, and the pace of growth is rapid. In fact, AI is now such a hot topic that almost all of the top technology companies are either investing in or producing AI chips, among them Apple, AMD, Arm, Baidu, Google, Graphcore, Huawei, IBM, Intel, Meta, NVIDIA, Qualcomm, Samsung, and TSMC. And the list keeps growing.
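To see how rapid, the two endpoints quoted above imply a compound annual growth rate (CAGR): the constant yearly rate r for which start × (1 + r)^years = end. The short calculation below solves for r using only the figures in the paragraph (2021 to 2030 is nine years of growth).

```python
def implied_cagr(start, end, years):
    """Constant yearly growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1

# Global AI market: about $87 billion in 2021, more than $1.6 trillion by 2030.
rate = implied_cagr(87e9, 1.6e12, 2030 - 2021)
print(f"{rate:.1%}")  # 38.2%
```

In other words, the forecast implies the market growing by roughly 38% every year for nine years; since the 2030 figure is a lower bound, the implied rate is too.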

Five years ago this industry hardly existed, and ten years ago most businesses were focused on high-speed gateways and cloud computing. Now architectures must be designed around the input, processing, transfer, and storage of the growing volume of data created by new devices with more sensors, including vehicles, smartphones, and even appliances with some built-in intelligence. That design work may happen at several levels.

AI is quickly becoming a potent tool that supports human engineers in the very difficult work of semiconductor design. According to Deloitte Global, the world’s top semiconductor companies will spend US$300 million on internal and external AI tools for designing chips in 2023. Over the following four years, that amount will grow by 20% annually to reach US$500 million. Against an estimated US$660 billion global semiconductor industry in 2023, that spend may not seem like much, but it matters because of its disproportionately high return on investment. AI design tools help chipmakers push the limits of Moore’s law, save time and money, ease the talent crisis, and even bring older chip designs up to date. The same techniques may also improve supply chain security and help prevent the next chip shortage. Put another way, a single-seat license for the AI software tools used to design a semiconductor may cost only a few tens of thousands of dollars, yet the chips created with such tools may be worth hundreds of billions of dollars.

Time is money: Advanced AI exponentially speeds up chip design

Electronic design automation (EDA) vendors have supplied chip design tools for many years; according to Deloitte Insights, this market will be worth more than US$10 billion in 2022 and grow by roughly 8% per year. EDA tools typically rely on physics modelling and rule-based systems to help human engineers design and validate semiconductors, and some have already adopted basic forms of AI. Over the last year, however, the biggest EDA firms have begun offering sophisticated AI-powered tools, while chipmakers and tech firms have built their own in-house AI design tools. These cutting-edge tools are not mere experiments: they are used in production on a variety of chip designs that are probably worth billions of dollars per year. Although they won’t replace human designers, their complementary strengths in speed and cost-effectiveness give chipmakers far more powerful design capabilities.

Advanced AI tools can assess human designs by finding placement flaws that increase power consumption, hurt performance, or waste area, then proposing alternative placements and simulating them to evaluate the changes. Iterating on previous versions in this way, the tools improve PPA (power, performance, and area). Advanced AI can do this automatically, achieving better PPA than human designers using conventional EDA tools, often in hours with a single design engineer rather than weeks or months with a team.
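The commercial tools’ internals are not public, but the loop described here (find a costly placement, try a change, evaluate it, keep it if it helps) can be illustrated with simulated annealing, a classical placement technique swapped in for illustration and not the AI method these tools use. The sketch measures cost with half-perimeter wirelength (HPWL), a common proxy for the wiring that drives power and timing; the nine-cell netlist and the annealing schedule are hypothetical.

```python
import math
import random

def hpwl(placement, nets):
    """Half-perimeter wirelength: sum over nets of the bounding-box half-perimeter."""
    total = 0
    for net in nets:
        xs = [placement[cell][0] for cell in net]
        ys = [placement[cell][1] for cell in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total

def anneal(placement, nets, steps=5000, t0=5.0, cooling=0.999, seed=0):
    """Swap pairs of cells between slots, keeping improvements and occasionally
    accepting worse swaps with probability exp(-delta / temperature)."""
    rng = random.Random(seed)
    current = dict(placement)
    cells = list(current)
    cost = hpwl(current, nets)
    best, best_cost = dict(current), cost
    temperature = t0
    for _ in range(steps):
        a, b = rng.sample(cells, 2)
        current[a], current[b] = current[b], current[a]
        delta = hpwl(current, nets) - cost
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            cost += delta
            if cost < best_cost:
                best, best_cost = dict(current), cost
        else:
            current[a], current[b] = current[b], current[a]  # reject: undo the swap
        temperature *= cooling
    return best, best_cost

# Hypothetical netlist: nine cells on a 3x3 grid, wired in a chain 0-1-2-...-8.
slots = [(x, y) for y in range(3) for x in range(3)]
start = {cell: slot for cell, slot in zip([4, 0, 8, 2, 6, 1, 7, 3, 5], slots)}
nets = [(i, i + 1) for i in range(8)]
best, best_cost = anneal(start, nets)
print(hpwl(start, nets), "->", best_cost)
```

Accepting some worse moves while the temperature is high lets the search escape local minima; learned approaches such as RL aim to choose moves more intelligently than random swaps.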

Tools based on graph neural networks (GNNs) and reinforcement learning (RL) deliver PPA equal to or better than that of expert designers, using fewer engineers and less time. Real-world outcomes include:

- MIT’s AI tool developed circuit designs that were 2.3 times more energy-efficient than human-designed circuits.

- MediaTek used AI tools to trim a key processor component’s size by 5% and reduce power consumption by 6%.

- Cadence improved a 5 nm mobile chip’s performance by 14% and reduced its power consumption by 3%, using AI plus a single engineer for 10 days instead of 10 engineers for several months.

- Alphabet consistently produces chip floor plans that outperform those of experienced human designers on PPA metrics, in six hours instead of weeks or months.

- NVIDIA used its RL tool to design circuits 25% smaller than those designed by humans using today’s EDA tools, with similar performance.

- Cadence has announced AI-driven verification applications that use ‘big data’ to “optimize verification workloads, boost coverage and accelerate root cause analysis of bugs,” according to the company.

- Google is using AI to design its next generation of AI chips more quickly than humans can.

- Samsung is using artificial intelligence to automate the extremely complex and subtle process of designing cutting-edge computer chips.
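The GNN-based tools mentioned above start by turning a netlist into numbers a model can reason about. In published floorplanning work, a graph neural network encodes the netlist so that a reinforcement-learning agent can decide where to place each block. The sketch below shows one generic round of mean-aggregation message passing (GraphSAGE-style) in pure Python; it is not the architecture of any tool listed above, and the node features and weight matrices are hypothetical (in practice the weights are learned).

```python
def matvec(matrix, vector):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]

def relu(vector):
    return [max(0.0, x) for x in vector]

def message_passing_layer(features, adjacency, w_self, w_neigh):
    """One round of mean-aggregation message passing.

    features:  node -> feature vector
    adjacency: node -> neighbouring nodes (blocks sharing a net)
    new_h(v) = ReLU(w_self @ h(v) + w_neigh @ mean(h(u) for u in neighbours(v)))
    """
    updated = {}
    for node, h in features.items():
        neighbours = adjacency.get(node, [])
        if neighbours:
            agg = [sum(features[n][i] for n in neighbours) / len(neighbours)
                   for i in range(len(h))]
        else:
            agg = [0.0] * len(h)
        pre_activation = [a + b for a, b in zip(matvec(w_self, h), matvec(w_neigh, agg))]
        updated[node] = relu(pre_activation)
    return updated

# Three hypothetical blocks with [normalised area, pin count] features.
features = {"A": [1.0, 2.0], "B": [3.0, 0.0], "C": [0.0, 1.0]}
adjacency = {"A": ["B", "C"], "B": ["A"], "C": ["A"]}
w_self = [[1.0, 0.0], [0.0, 1.0]]   # hypothetical; learned in practice
w_neigh = [[0.5, 0.0], [0.0, 0.5]]  # hypothetical; learned in practice
embeddings = message_passing_layer(features, adjacency, w_self, w_neigh)
print(embeddings)  # {'A': [1.75, 2.25], 'B': [3.5, 1.0], 'C': [0.5, 2.0]}
```

Stacking several such layers lets each block’s embedding reflect connectivity several hops away, which is what a placement policy needs to judge where a block should go.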

AI appears to be changing how semiconductors are designed across the industry. In a research paper released in June, Google disclosed that it had used AI to arrange the components on its Tensor Processing Unit (TPU) chips, which it uses to train and run AI systems in its data centers. Google’s Pixel 6 will use a custom processor manufactured by Samsung; a Google spokeswoman declined to comment on whether AI played a role in designing that smartphone processor.

Major chipmakers and designers are already using the latest AI to create semiconductors, even at advanced nodes. In fact, some chips are becoming so complex that advanced AI may soon be a necessity. For instance, the largest chip designed with Synopsys tools has more than 1.2 trillion transistors and 400,000 AI-optimized cores.

The addressable market is also growing now that advanced AI is available through cloud-based EDA services. Once the tools are in the cloud, anyone can use them, not just specialists and market leaders, including smaller businesses with less technical expertise and computing capacity.

Agile delivery

Teams should focus on business value and incremental improvement to keep AI/ML use cases from getting stuck in a “proof-of-concept” cycle with limited applicability or scalability.

Agile software development can help semiconductor companies keep that focus. AI/ML research involves rigorous discovery and exploration, but semiconductor companies also need to get input from the people who will use the models. Many agile teams have succeeded with the vertical-slice method, which covers data intake, modelling, recommendation generation, and distribution to users in the first or second sprint. This approach may conflict with many traditional practices, since semiconductor companies usually change production engineering only when they are confident of optimal outcomes.
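A vertical slice means every stage exists, however thin, from the first sprint. The hypothetical sketch below wires the four stages into one pass: parse raw tool readings, fit a deliberately simple model (ordinary least squares of yield against chamber temperature), turn predictions into recommendations, and format them for engineers. The field names, data, and 92% yield threshold are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class Reading:
    tool_id: str
    chamber_temp_c: float
    yield_pct: float

def ingest(rows):
    """Data intake: parse raw (tool_id, temperature, yield) tuples."""
    return [Reading(tool, float(temp), float(y)) for tool, temp, y in rows]

def fit(readings):
    """Modelling: ordinary least-squares line of yield versus chamber temperature."""
    n = len(readings)
    mean_t = sum(r.chamber_temp_c for r in readings) / n
    mean_y = sum(r.yield_pct for r in readings) / n
    sxx = sum((r.chamber_temp_c - mean_t) ** 2 for r in readings)
    sxy = sum((r.chamber_temp_c - mean_t) * (r.yield_pct - mean_y) for r in readings)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_t

def recommend(readings, model, min_yield):
    """Recommendation: tools whose predicted yield falls below `min_yield`."""
    slope, intercept = model
    return sorted({r.tool_id for r in readings
                   if slope * r.chamber_temp_c + intercept < min_yield})

def distribute(tool_ids):
    """Distribution: format recommendations for the engineers who act on them."""
    return [f"Review chamber temperature on {tool}" for tool in tool_ids]

# Hypothetical readings from four tools.
raw = [("T1", 200, 95), ("T2", 210, 93), ("T3", 220, 91), ("T4", 230, 89)]
readings = ingest(raw)
for message in distribute(recommend(readings, fit(readings), min_yield=92.0)):
    print(message)
```

Later sprints would deepen each stage (real data feeds, better models, delivery into engineers’ existing tools) without changing the end-to-end shape, which is what lets users give feedback from the start.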

Agile teams aim to eliminate operational dependencies on outside groups. Organizational boundaries between data owners, AI/ML professionals, and IT infrastructure teams make such dependencies hard to avoid, so agile AI/ML teams are cross-functional and include all the essential expertise, even if some members take part in only a few sprints. Agile teams can also self-serve data and infrastructure. The shift to agile AI/ML delivery can happen as quickly as is feasible, provided senior executives embrace it and companies change their attitudes and practices.

Technology

Successful companies build a connectivity layer that provides real-time access to production and measurement tools, auxiliary equipment, and facilities. Tool OEMs can help ensure this connectivity, which is crucial for production. In a second piece, we’ll discuss AI/ML tool manufacturers.

Semiconductor companies also need a data-integration layer that aggregates data before it is fed to analytics engines and use cases. To avoid complexity and prevent parallel IoT stacks, they must merge data and use cases from diverse tool providers into this single layer.
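One common way to build such a layer is an adapter per tool vendor that maps each native format into a single shared schema, so every downstream use case reads one record type instead of talking to each vendor’s stack. The vendor formats, field names, and sample values in this sketch are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class Measurement:
    """Shared schema that every downstream use case reads."""
    tool_id: str
    parameter: str
    value: float
    timestamp: datetime

def from_vendor_a(row):
    # Hypothetical vendor A: dicts with epoch-second timestamps and string values.
    return Measurement(row["tool"], row["param"], float(row["val"]),
                       datetime.fromtimestamp(row["ts"], tz=timezone.utc))

def from_vendor_b(row):
    # Hypothetical vendor B: "tool;param;value;ISO-8601 time" strings.
    tool, param, value, ts = row.split(";")
    return Measurement(tool, param, float(value), datetime.fromisoformat(ts))

ADAPTERS = {"vendor_a": from_vendor_a, "vendor_b": from_vendor_b}

def integrate(batches):
    """Normalize every vendor's rows into Measurements, ordered by time."""
    records = []
    for source, rows in batches.items():
        adapter = ADAPTERS[source]
        records.extend(adapter(row) for row in rows)
    return sorted(records, key=lambda m: m.timestamp)

batches = {
    "vendor_a": [{"tool": "etch-01", "param": "rf_power_w", "val": "450", "ts": 1672531200}],
    "vendor_b": ["cvd-07;chamber_temp_c;312.5;2023-01-01T00:00:10+00:00"],
}
for m in integrate(batches):
    print(m.timestamp.isoformat(), m.tool_id, m.parameter, m.value)
```

Adding a new tool vendor then means writing one adapter, not another parallel pipeline.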

Successful organizations will use both edge and cloud computing for AI/ML. Real-time applications generally require edge computing, which deploys AI/ML use cases inside or near the tools. Cloud solutions provide connectivity across fabs, pooling training data for use cases. Because semiconductor companies are traditionally careful about data security, they may keep critical data on-premises.

Data

Semiconductor companies have hundreds of tools in each fab, and some tools produce terabytes of data. To maximize effectiveness and efficiency, companies must prioritize data that enables many use cases, since such data has a greater impact than data serving a single initiative.

Even if companies limit the amount of data they process, their AI/ML projects will demand time and resources, such as data engineers on AI/ML teams. Strict data-governance principles help ensure that both existing and newly generated data are immediately usable, high-quality, and trustworthy. Successful companies have a data-governance team to maintain data consistency and quality.
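Governance rules like these are often enforced as automated checks at ingestion time, so bad records are quarantined before any model sees them. The sketch below, with hypothetical field names and valid ranges, checks completeness and plausible ranges and splits a batch into clean and quarantined records.

```python
# Hypothetical governance rules: field -> (minimum, maximum) allowed value.
RULES = {
    "chamber_temp_c": (150.0, 400.0),
    "pressure_torr": (0.001, 10.0),
}

def violations(record, rules):
    """List every rule a record breaks; an empty list means it passes."""
    problems = []
    for field, (low, high) in rules.items():
        value = record.get(field)
        if value is None:
            problems.append(f"{field}: missing")
        elif not low <= value <= high:
            problems.append(f"{field}: {value} outside [{low}, {high}]")
    return problems

def split_clean(records, rules):
    """Separate records that pass every rule from those needing review."""
    clean, quarantined = [], []
    for record in records:
        (quarantined if violations(record, rules) else clean).append(record)
    return clean, quarantined

batch = [
    {"chamber_temp_c": 310.0, "pressure_torr": 0.5},
    {"chamber_temp_c": 980.0, "pressure_torr": 0.5},  # implausible temperature
    {"pressure_torr": 0.4},                           # temperature missing
]
clean, quarantined = split_clean(batch, RULES)
print(len(clean), len(quarantined))  # 1 2
```

Returning the list of violations rather than a bare pass/fail gives the governance team a record of why each row was quarantined.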

The Future of Using AI in Chip Design

There is an amusing irony in AI needing faster and better processors while also being used to design them. Even if AI is unlikely ever to fully replace humans in such demanding work, there is a lot of room for improvement.

The most intriguing aspect of building these AI algorithms and neural networks is that it forces people to think about how they actually carry out the tasks they are training neural networks to perform.

For example, you don’t need to be an expert chip designer to build a neural network that is highly efficient at designing a semiconductor, but you do need to understand at least how the work is done. That understanding lets programmers extract the most important information and steer the neural networks in the right direction. We seem to be moving quickly toward that point.

Conclusion

As these chips continue to evolve, AI will be used in many ways both to build them and inside them. That will make it harder to design such chips and to guarantee that they perform as expected, in terms of both functionality and security, over their entire lifespan. Working out where the benefits outweigh the risks will take time.

Although researchers are working to create AI that can mimic the human brain, they are still a long way from building a machine that can think for itself. However, there are several ways to tailor these systems to particular use cases and applications, and not all of them require human involvement. Over time, that will probably lead to AI being used in more places to accomplish more tasks, creating power, performance, and security issues that are hard to anticipate, detect, and ultimately resolve.